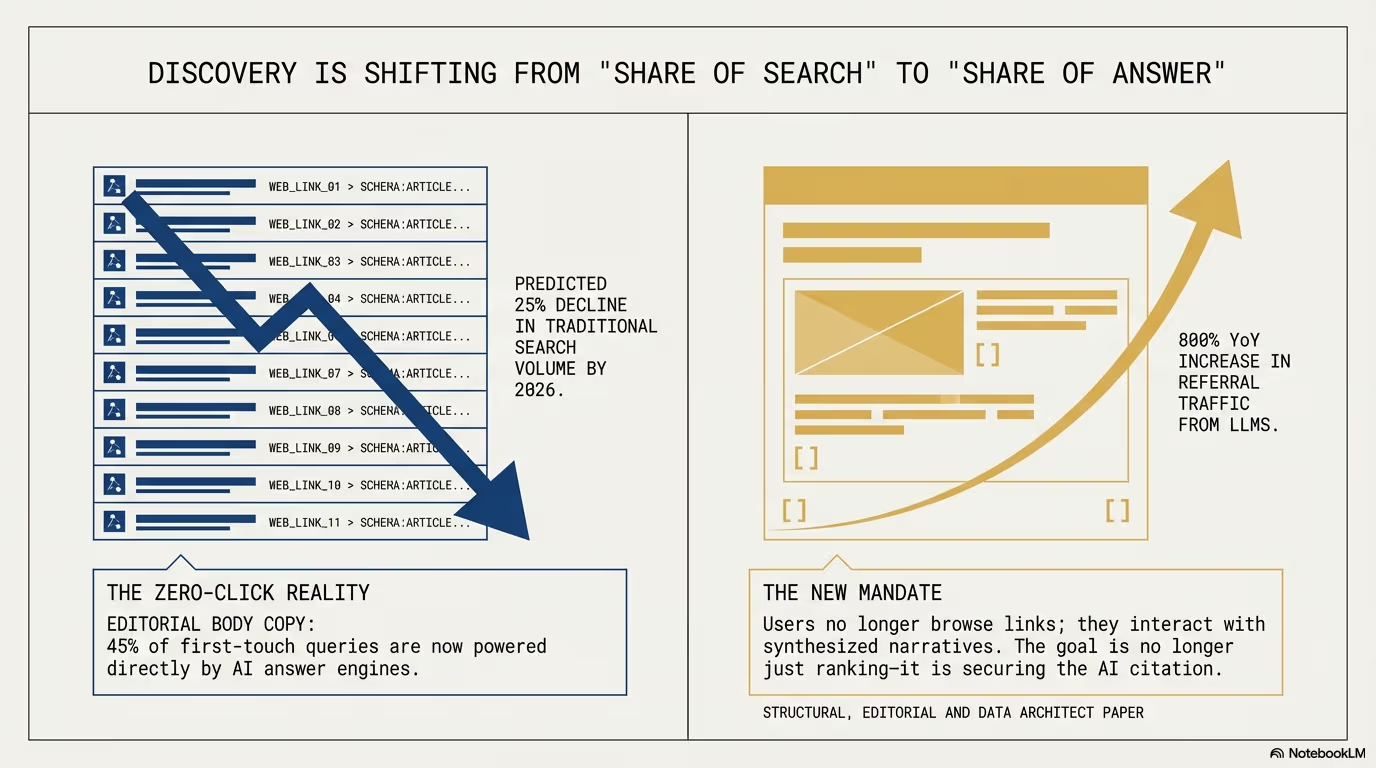

By 2026, AI search has replaced the blue link as the primary gateway to information. Gartner projected a 25% drop in traditional search volume, and with AI-referred traffic growing 527% year-over-year, these 7 truths define survival in the zero-click era. Understanding these principles is essential for any business that depends on organic visibility, because the rules of discovery have been rewritten from the ground up.

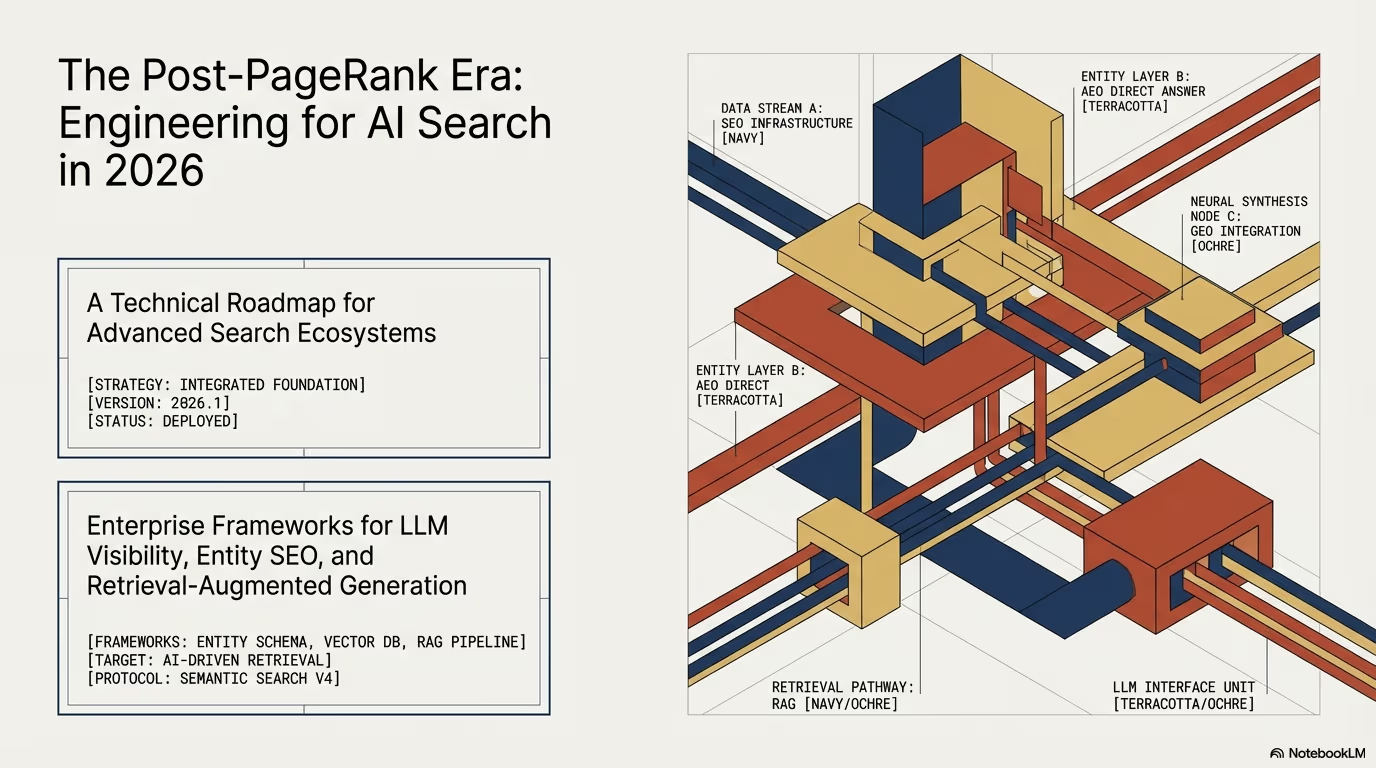

The shift from SEO to GEO

The digital landscape has undergone a tectonic shift. For decades, the blue link was the primary currency of the internet, but that era is over. We have moved beyond the search engine and into the age of the answer engine. Gartner’s projection of a 25% decline in traditional search volume by 2026 has largely materialized, and the evidence is everywhere: users are no longer hunting through ten blue links on a results page. They are having high-context conversations with AI systems that synthesize information and deliver answers directly.

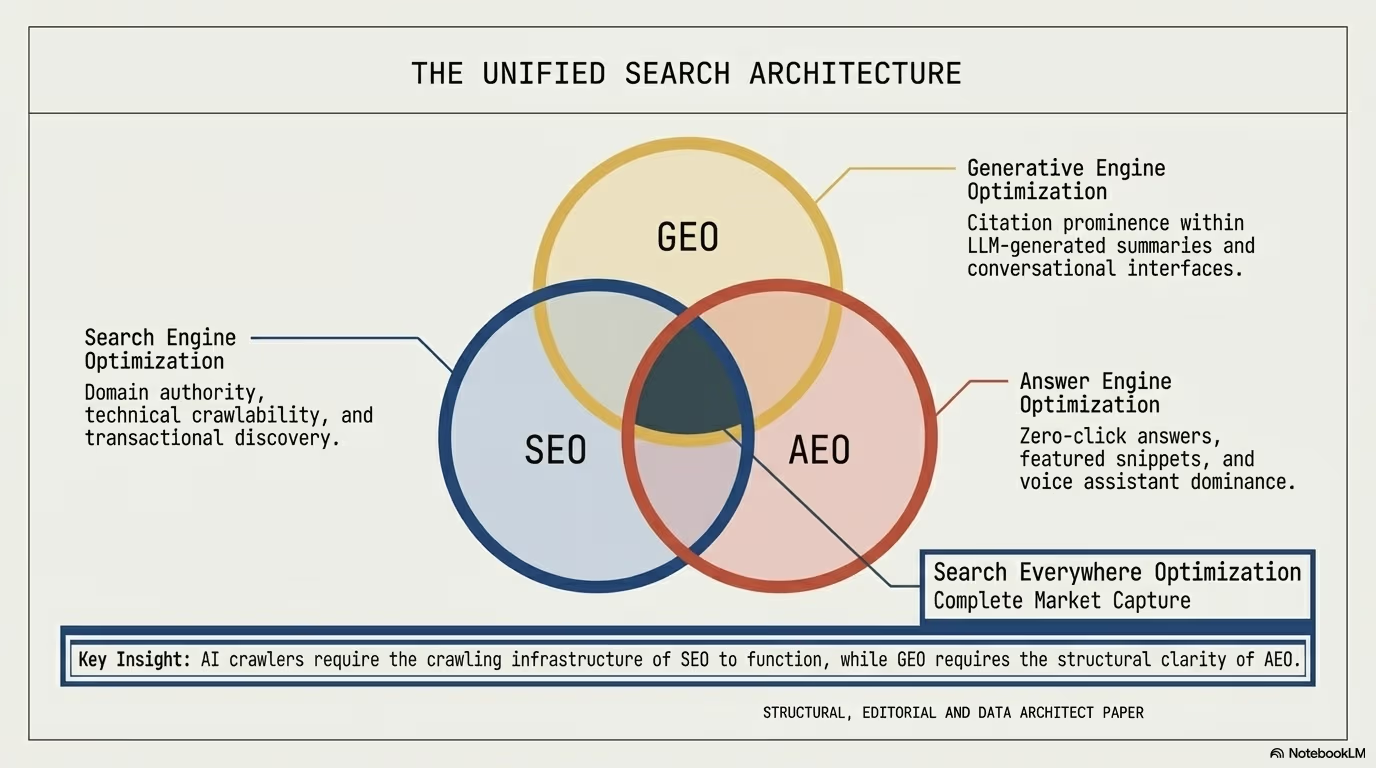

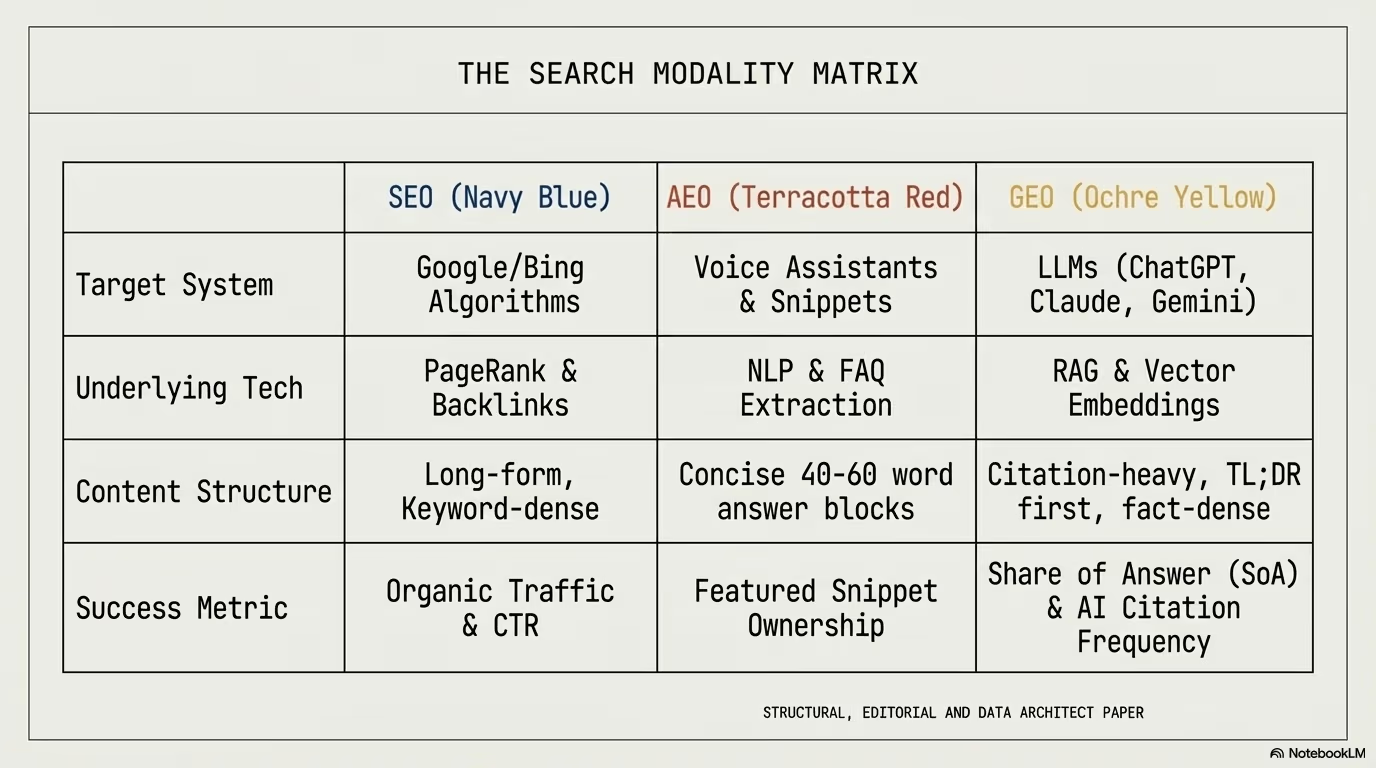

This is the transition from Search Engine Optimization (SEO) to Generative Engine Optimization (GEO). In this new paradigm, visibility is not about ranking number one on a list. It is about becoming the synthesized answer itself. The implications are profound for every business that has invested in traditional search marketing.

With AI-referred traffic growing by a staggering 527% year-over-year, the seven takeaways that follow represent the new laws of survival in an AI-first, zero-click world. Each one is backed by data, and together they form a comprehensive playbook for adapting your digital strategy to the realities of 2026.

The critical difference between SEO and GEO lies in what each system rewards. SEO rewards pages that attract human clicks through compelling titles and meta descriptions. GEO rewards pages that AI systems trust enough to cite as authoritative sources. These are fundamentally different optimization targets, and treating them as the same will leave your content stranded between two paradigms.

1. The “TLDR” rule: citations are won in the first 200 words

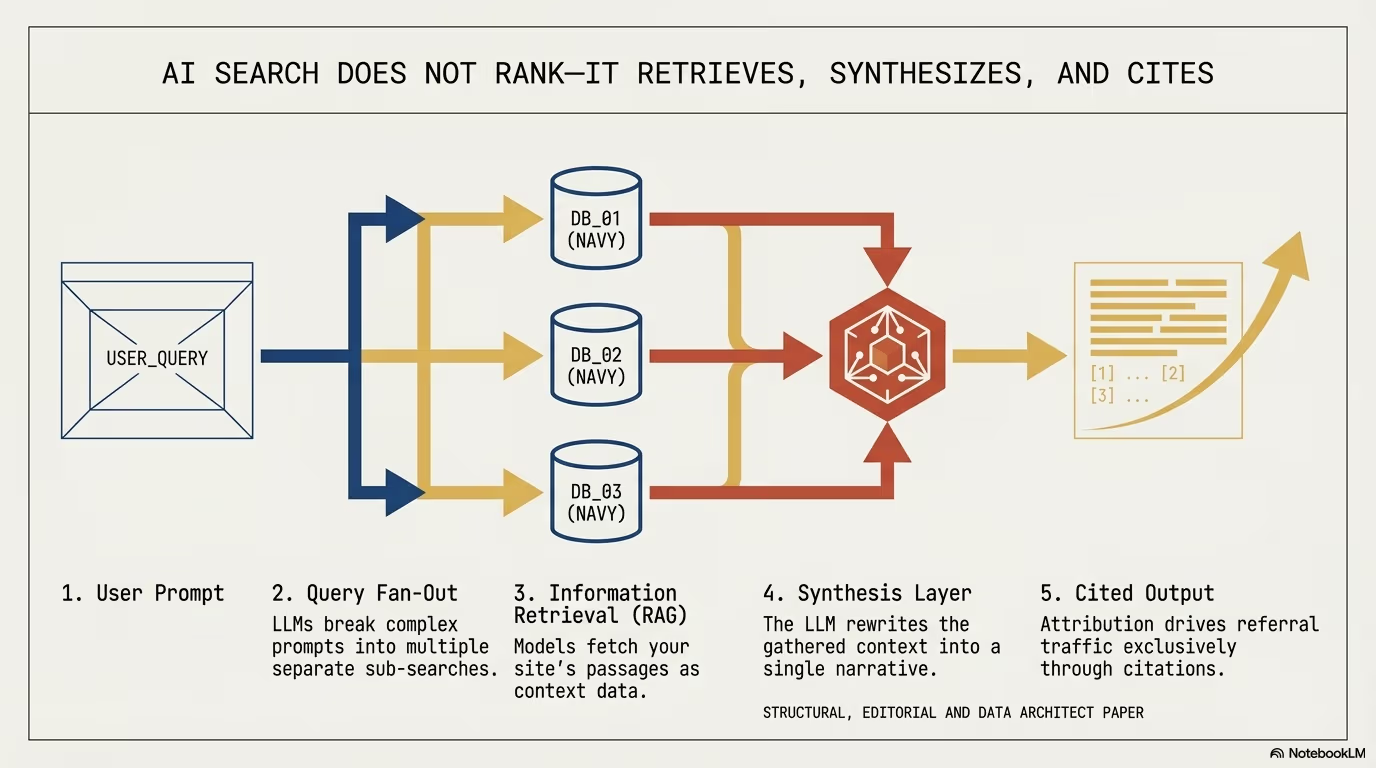

The days of burying the lead to inflate time-on-page metrics are officially dead. Modern AI retrieval systems utilize Retrieval-Augmented Generation (RAG), a process built for extreme efficiency. These systems do not read your entire article from top to bottom. They extract specific passages to feed the model context, and the extraction process heavily favors content that appears early in the document.

Data shows that 44.2% of all verified LLM citations come from the first 30% of a page. This statistic alone should reshape how every content team approaches article structure. If the direct answer is not in your opening paragraphs, your content effectively does not exist to the machine.

To win the citation, you must adopt an “answer-first” architecture: lead with a concise summary under 200 words that directly answers the primary query, then follow with data-rich nuance and supporting evidence. Think of it as writing an executive summary that a machine can extract in isolation and still deliver a complete, accurate answer to the user.

This does not mean your content should be shallow. Quite the opposite. The depth must be there, but the architecture must be inverted. Place your most citation-worthy facts, statistics, and declarative statements in the opening section. Then use the remaining content to provide the context, evidence, and nuance that builds authority.

“Answer the question first, then explain the nuances. This is how you should approach writing for AI search in general: clarity first, depth second.”

The practical implication is that every piece of content needs what might be called a “citation header”: a dense, fact-rich opening section specifically designed for RAG extraction. This section should contain your primary keyword naturally, a direct answer to the target query, and at least two supporting data points. Everything that follows builds on this foundation.
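The citation header can be checked mechanically before publishing. The following is a minimal Python sketch, not a definitive tool: the function names are hypothetical, the 200-word window comes from the rule above, and counting numbers is only a rough proxy for "supporting data points". Whether the opening actually answers the query still needs a human read.

```python
import re


def lead_section(text: str, max_words: int = 200) -> str:
    """Return the first max_words words of the text (the assumed extraction window)."""
    return " ".join(text.split()[:max_words])


def audit_citation_header(text: str, keyword: str, max_words: int = 200) -> dict:
    """Check the opening section for the primary keyword and at least two data points."""
    lead = lead_section(text, max_words)
    # Assumption: any number, with optional decimals and percent sign, counts as a data point.
    data_points = re.findall(r"\d+(?:\.\d+)?%?", lead)
    return {
        "keyword_in_lead": keyword.lower() in lead.lower(),
        "data_points_in_lead": len(data_points),
        "has_two_data_points": len(data_points) >= 2,
    }
```

Running this over a draft and fixing any `False` value is a quick way to catch an opening that buries its facts below the extraction window.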

2. The 83% shocker: ranking number one no longer guarantees AI visibility

One of the most disruptive realizations for business leaders is that traditional Google rankings have decoupled from AI citations. The overlap between top-ranking Google links and the sources cited by AI engines has dropped below 20%. This means more than four out of five AI citations come from sources that would not appear on a traditional top-ten results page.

More shockingly, research from Digital Applied indicates that 83% of AI Overview citations come from pages ranking outside the organic top ten. This statistic demolishes the assumption that good SEO automatically translates to AI visibility. The two systems are evaluating content through fundamentally different lenses.
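You can measure this overlap for your own target queries. The Python sketch below assumes you have already collected two lists by hand or from your own tooling: the organic top-ten URLs for a query and the URLs an AI engine cited for the same query. The function name and inputs are illustrative, not part of any vendor API.

```python
def citation_overlap(top_ranked: list[str], ai_cited: list[str]) -> float:
    """Share of AI-cited URLs that also appear in the organic top results."""
    if not ai_cited:
        return 0.0
    ranked = set(top_ranked)
    hits = sum(1 for url in ai_cited if url in ranked)
    return hits / len(ai_cited)
```

A result near 0.2 or lower for your queries would match the pattern described above: most citations are going to pages that classic rankings do not surface.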

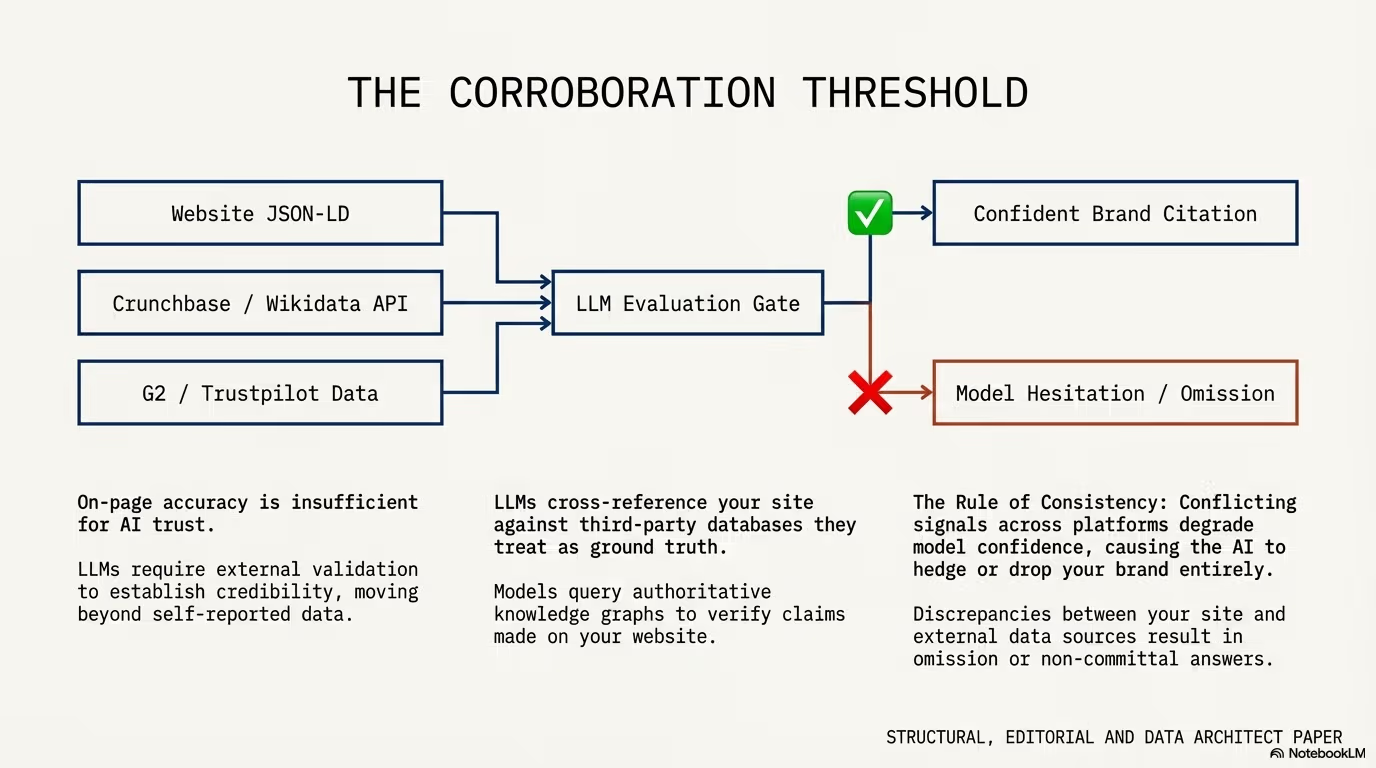

AI models prioritize machine-extractable facts and structured clarity over the backlink-heavy signals of classic SEO. Where Google’s algorithm weighs domain authority, link equity, and user engagement metrics, AI systems evaluate how easily they can extract a verifiable fact, how clearly the content is structured, and how confident they can be in the accuracy of the information.

This decoupling means your “SEO-perfect” site might be invisible to the very AI models now handling your customers’ queries. It also means opportunity: a smaller site with exceptional content structure, clear factual statements, and strong entity markup can outperform a domain authority giant in AI citations.

The practical takeaway is that businesses need parallel optimization strategies. Your SEO efforts should continue, but you must layer GEO-specific optimizations on top: structured data markup, clear factual statements in the first 200 words, entity-level schema implementation, and regular content freshness updates. Running SEO without GEO in 2026 is like optimizing for desktop in 2015 while ignoring mobile.

3. Authority is a web, not a page (the 3.2x multiplier)

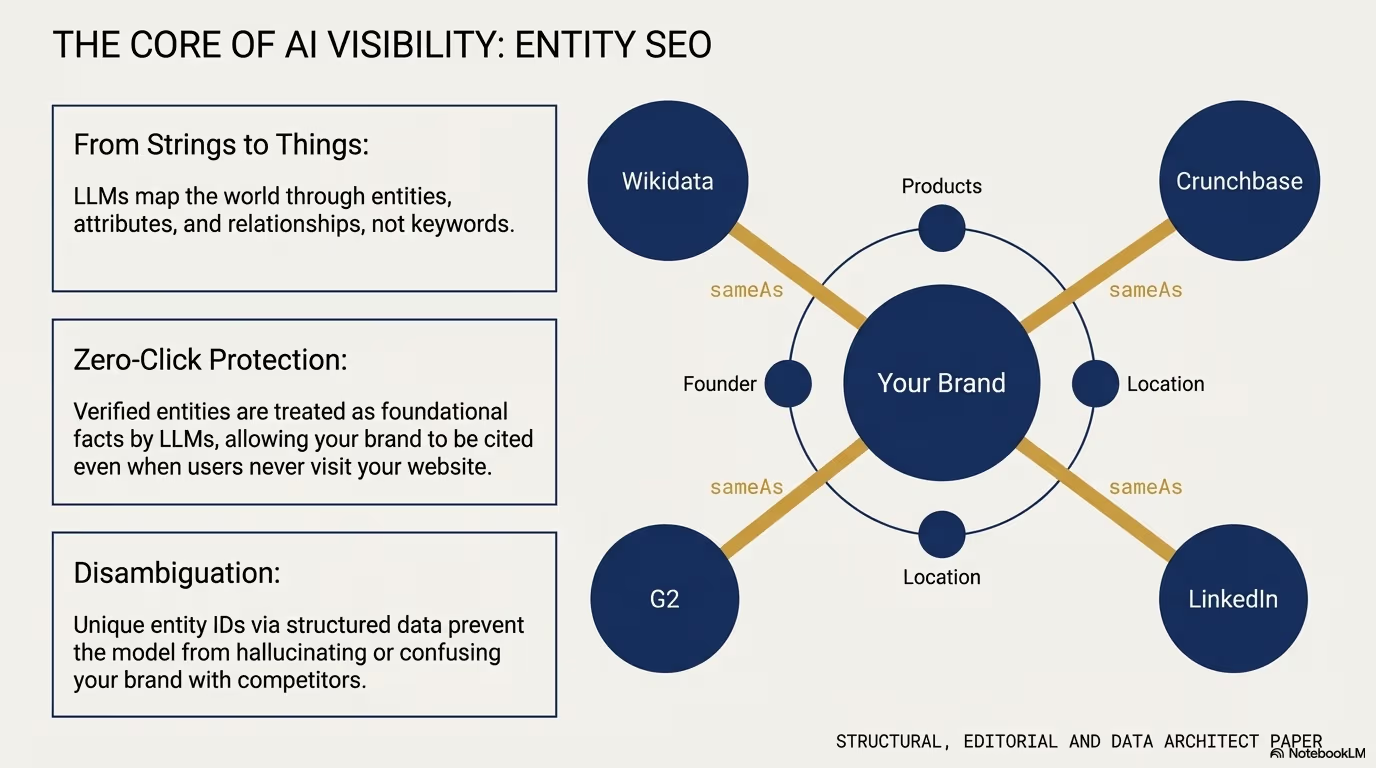

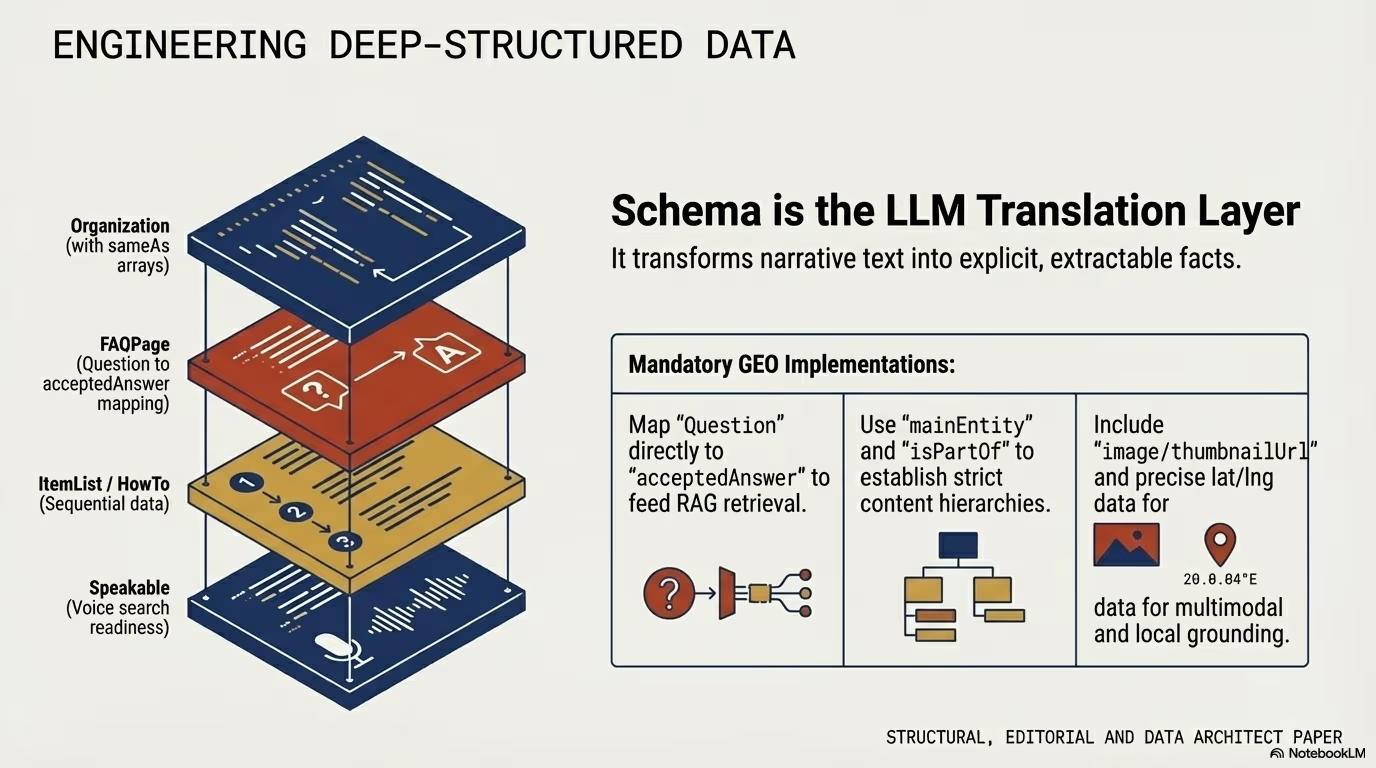

AI engines do not just look for a good page. They look for topical graph authority: evidence that a source covers a subject across a connected set of pages. The shift to an entity-first architecture is now the structural foundation of GEO, and the data supporting this is compelling.

Analysis of millions of AI citations confirms that 86% of citations come from sites with five or more interconnected pages on a specific topic. Isolated pages, no matter how well-written, rarely earn AI citations because the generative model cannot verify the broader authority of the source.

Using a pillar-cluster architecture provides a 3.2x boost in citation rates. AI systems need to see a dense network of bidirectional internal links to feel confident enough to cite your brand as a definitive authority. Without this web of context, individual pages remain orphaned and untrusted by generative models.

What does this look like in practice? It means building content ecosystems, not standalone articles. A pillar page covers the broad topic comprehensively, and cluster pages dive deep into subtopics while linking back to the pillar and to each other. The internal link structure creates a semantic web that AI can traverse to verify authority.

For example, a site covering “WordPress security” as a pillar should have interconnected cluster pages on firewall configuration, malware detection, login hardening, SSL implementation, plugin vulnerability scanning, and incident response. Each page links to the others with descriptive anchor text, creating a dense knowledge graph that AI systems can navigate and trust.
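A cluster like this is easy to audit for one-way links. The sketch below is a minimal Python illustration, assuming you can export your internal links as a mapping from each page to the pages it links to. The URL paths are hypothetical.

```python
def missing_backlinks(links: dict[str, set[str]]) -> list[tuple[str, str]]:
    """Return (source, target) pairs where source links to target but target does not link back."""
    gaps = []
    for source, targets in links.items():
        for target in targets:
            if source not in links.get(target, set()):
                gaps.append((source, target))
    return sorted(gaps)


# Hypothetical cluster: the login-hardening page receives a link but links to nothing.
cluster = {
    "/wordpress-security": {"/firewall-configuration", "/malware-detection", "/login-hardening"},
    "/firewall-configuration": {"/wordpress-security", "/malware-detection"},
    "/malware-detection": {"/wordpress-security", "/firewall-configuration"},
    "/login-hardening": set(),
}

print(missing_backlinks(cluster))
# [('/wordpress-security', '/login-hardening')]
```

Each pair in the output is a link that should be reciprocated to keep the cluster bidirectional.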

The entity-first approach goes further. Every key concept on your site should be linked to its corresponding Wikidata entity, and your structured data should express entity relationships clearly. When an AI system can trace a clear entity graph across your content, it treats your site as a knowledge base rather than a collection of disconnected articles.
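One common way to express these entity links is schema.org JSON-LD, using the `about` and `sameAs` properties. The Python sketch below builds such a block. The function name is hypothetical, and the Wikidata ID `Q00000` is a placeholder, not a real entity: look up the correct ID for each concept before publishing.

```python
import json


def article_jsonld(headline: str, date_modified: str, entities: dict[str, str]) -> str:
    """Build schema.org Article markup that ties each named concept to a Wikidata entity."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "dateModified": date_modified,
        "about": [
            {
                "@type": "Thing",
                "name": name,
                "sameAs": f"https://www.wikidata.org/wiki/{qid}",
            }
            for name, qid in entities.items()
        ],
    }
    return json.dumps(data, indent=2)


# Placeholder ID for illustration only.
print(article_jsonld("WordPress security guide", "2026-01-15", {"WordPress": "Q00000"}))
```

The resulting JSON would typically be embedded in the page inside a `script` element of type `application/ld+json`.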

4. The 90-day freshness cliff

In the AI era, content has a shorter shelf life than ever. AI systems exhibit a massive recency bias, prioritizing up-to-date information to avoid hallucinations or outdated advice. The data on this is stark and demands attention from every content strategist.

Content updated within 90 days achieves twice the citation rate of older material. This is not a marginal improvement. For a competitive query, it can decide whether your content gets cited or ignored.

Conversely, content that has not been touched in over 18 months is largely ignored by generative engines, regardless of its historical authority. A page that once earned thousands of backlinks and ranked number one for years can become invisible to AI systems simply because it has not been refreshed with current data and verified facts.

The static “publish and forget” model has been replaced by a mandatory quarterly refresh cycle. If your data is not fresh, the AI will simply find a competitor whose data is. This represents a fundamental shift in content economics: the cost of maintaining content is now as important as the cost of creating it.

Practical steps for maintaining freshness include updating statistics and data points quarterly, adding new sections that address emerging subtopics, refreshing publication and last-modified dates with genuine content changes, verifying that all external links still work and point to current resources, and revising any recommendations or best practices that may have changed since the last update.

The freshness signal is not just about changing a date. AI systems are sophisticated enough to detect substantive updates versus cosmetic changes. A genuine refresh adds new information, updates outdated statistics, and reflects the current state of the topic.
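The two thresholds in this section, 90 days and 18 months, translate directly into a content audit. Here is a minimal Python sketch, assuming you can pull a last-substantive-update date for each page from your CMS. The labels and the 548-day approximation of 18 months are choices made for this example.

```python
from datetime import date


def freshness_status(last_updated: date, today: date) -> str:
    """Classify a page by the age of its last substantive update."""
    age_days = (today - last_updated).days
    if age_days <= 90:
        return "fresh"
    if age_days <= 548:  # roughly 18 months
        return "due for refresh"
    return "stale"
```

Sorting a content inventory by this status gives you a quarterly refresh queue, with "stale" pages at the top.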

5. AI systems prefer the “legitimacy layer” (the earned media bias)

AI search engines exhibit a systematic and overwhelming bias toward earned media: third-party mentions on authoritative platforms, not brand-owned content. This is the legitimacy layer, and understanding it is crucial for any GEO strategy.

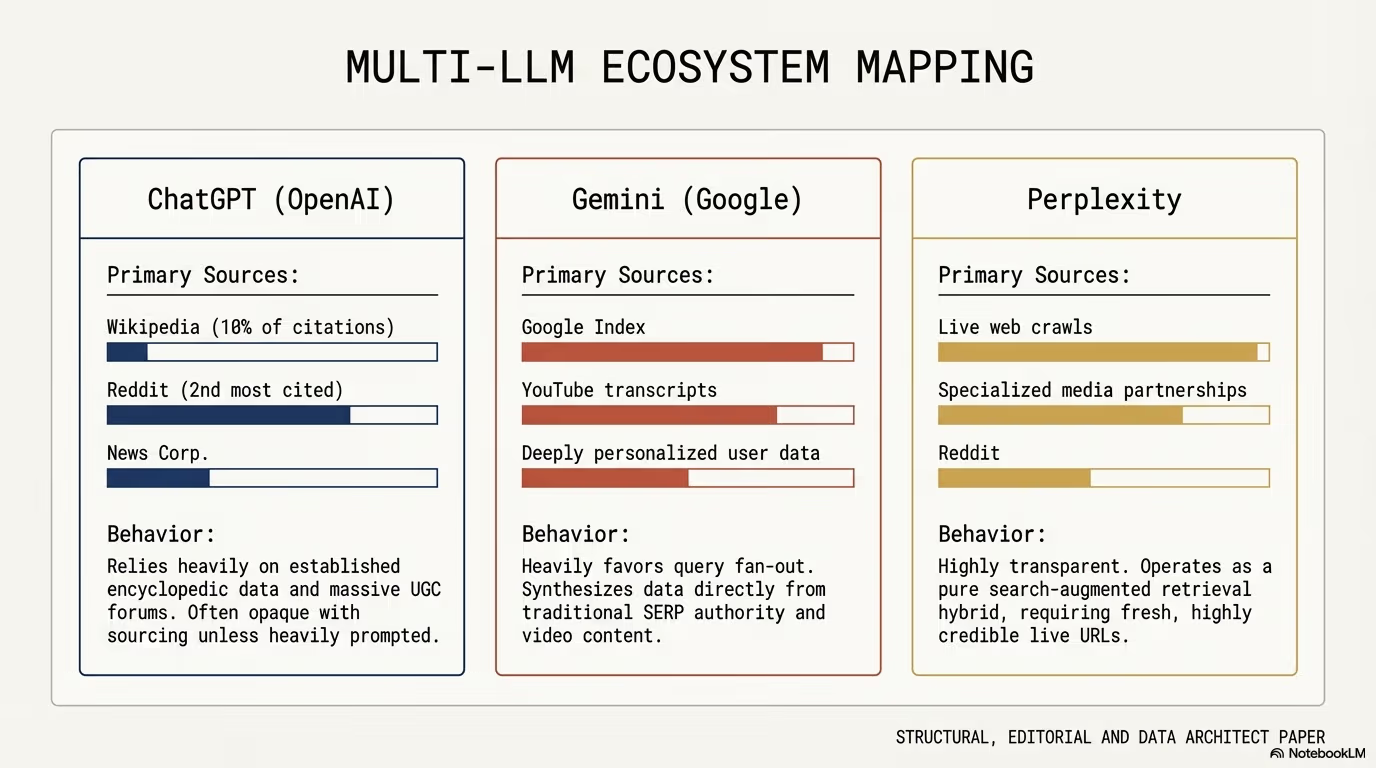

For instance, Wikipedia remains a dominant force in AI discovery, accounting for 7.8% of all ChatGPT citations. This single platform generates more AI citations than most entire corporate websites combined. The second most cited source, Reddit, trails behind but still represents a massive share of AI-referenced content.

For AI to cite your own site, you must often first earn mentions on other sites that the AI already trusts, such as Reddit, YouTube, major news outlets, industry publications, and academic repositories. This creates a “zero-click moat” where your presence on high-authority, third-party domains acts as the trust signal required for an AI to eventually justify citing your primary domain.

The mechanism works like this: when an AI model encounters your brand mentioned positively on Wikipedia, Reddit, and industry publications, it builds an internal confidence score for your domain. Once that confidence threshold is crossed, the model becomes willing to cite content directly from your website. Without that third-party validation layer, your first-party content exists in a trust vacuum.

“Wikipedia leads ChatGPT’s citations, with a share of 7.8%, while the second most cited source, Reddit, trails far behind… it serves as a ‘legitimacy layer’ for companies.”

This means digital PR, community engagement, and thought leadership on third-party platforms are no longer “nice to have” brand activities. They are structural requirements for AI visibility. Every mention on an authoritative platform increases the probability that AI systems will trust and cite your primary domain content.

The strategy requires patience. Building the legitimacy layer is a long-term investment, but the compounding returns in AI visibility make it one of the highest-ROI activities in digital marketing today.

6. The /llms.txt “secret handshake”

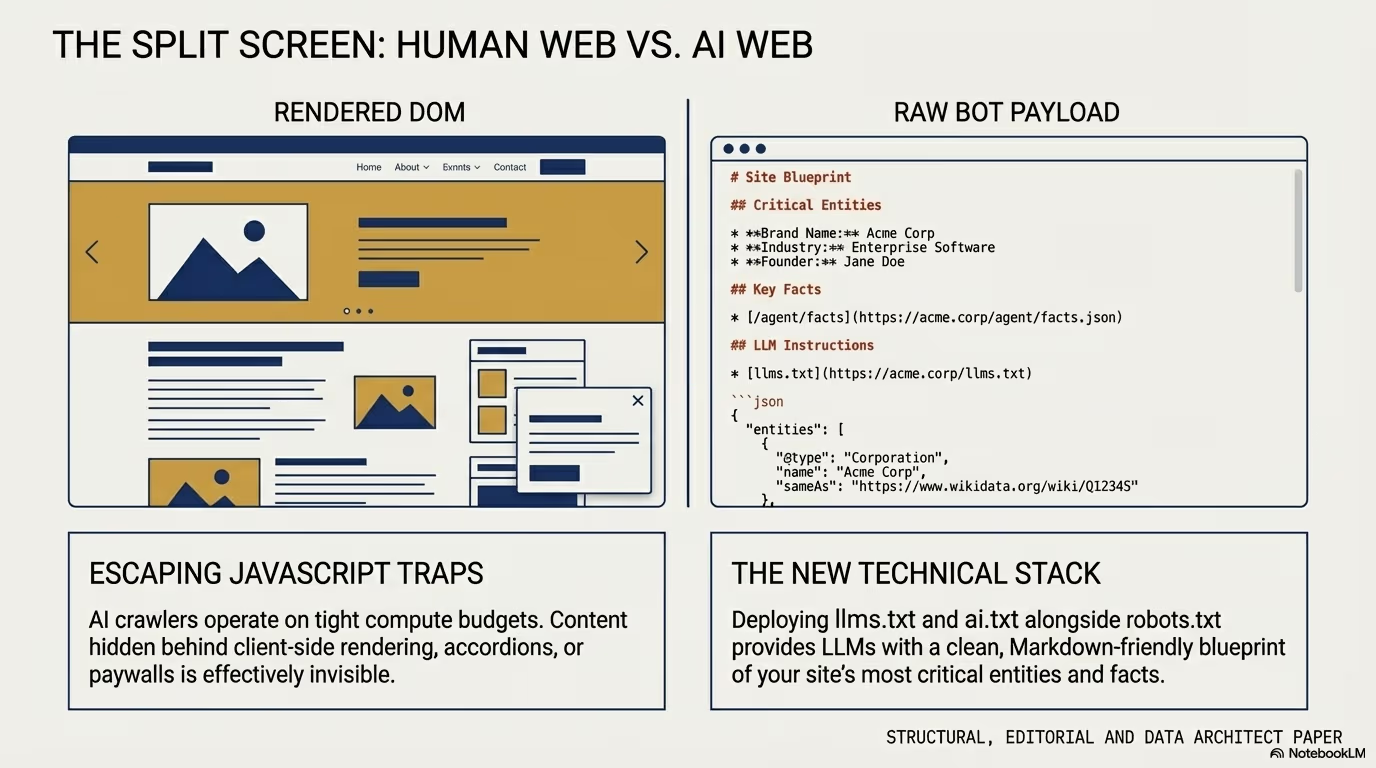

As websites evolve to serve both humans and machines, a proposed standard has emerged: the /llms.txt file. While robots.txt tells crawlers where they can go, /llms.txt provides a curated, Markdown-formatted directory specifically for inference-time use by LLMs. This is a fundamentally different purpose: not controlling access, but actively feeding structured information to AI systems when they need it most.

This file solves the “context window” problem. AI bots often struggle to parse complex HTML, navigation menus, JavaScript-rendered content, and multi-layered page structures. When an AI agent visits your site during a live conversation, it has a limited context window and limited time to extract relevant information. A clean, machine-readable summary solves this problem elegantly.

By providing a structured /llms.txt file, you allow AI agents to ingest your expert-level information instantly during a user conversation. The file typically includes your site’s core expertise areas, key factual claims, primary content categories, and links to your most authoritative pages, all formatted in clean Markdown that any LLM can process without parsing overhead.

This secret handshake is becoming as essential as the sitemap was for the previous era. Forward-thinking sites are already implementing complementary files: /llms.txt for structured content summaries, /ai-sitemap.xml for AI-specific content discovery, and structured JSON feeds like /ai-training-data.json that provide machine-optimized content to any AI system that requests it.

The implementation is straightforward. Create a Markdown file at your domain root that describes your site’s expertise, lists your most important content with brief summaries, and provides clear factual statements that AI models can extract and cite. Update it whenever you publish significant new content. Think of it as your site’s resume for AI systems: concise, factual, and structured for machine consumption.
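As a rough illustration, the Python sketch below generates such a file. It follows the layout described in the public llms.txt proposal as I understand it: a top-level heading with the site name, a blockquote summary, then sections of Markdown links with short notes. The function name, site name, and URLs are hypothetical.

```python
def build_llms_txt(
    site_name: str,
    summary: str,
    sections: dict[str, list[tuple[str, str, str]]],
) -> str:
    """Render an llms.txt document: title, blockquote summary, then link lists per section."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, pages in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in pages:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical site used for illustration.
content = build_llms_txt(
    "Example Security Blog",
    "Practical WordPress security guidance for small store owners.",
    {
        "Guides": [
            (
                "Firewall configuration",
                "https://example.com/firewall-configuration",
                "Step-by-step firewall setup for WordPress.",
            ),
        ],
    },
)
print(content)
```

Write the returned string to a file named llms.txt at your domain root, and regenerate it as part of your publishing workflow so it stays in sync with the site.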

Sites that implement this standard report measurably higher AI citation rates, particularly for queries where multiple sources compete for the same answer. The /llms.txt file gives your content a structural advantage in the extraction process.

7. Intent over precision: the power of “query fan-out”

AI search is built on natural language processing (NLP), which prioritizes intent matching over keyword density. The way users search has fundamentally changed, and the data illustrates the magnitude of this shift.

Traditional typed searches are abbreviated fragments averaging 4 words. A user might type “best WordPress security plugins 2026.” AI queries are conversational and detailed, averaging 23 words. The same user tells an AI: “I’m running a WooCommerce store on shared hosting and I’m worried about security. What plugins should I install to protect against the most common attacks without slowing down my site?”

Users now provide full situational context in their prompts. They describe their specific situation, constraints, preferences, and goals. In response, AI engines perform “query fan-out,” breaking one complex question into multiple sub-queries to find the best answer. A single conversational question like the one above might generate five or six internal sub-queries, each searching for a different aspect of the answer.

Content that sounds robotic or keyword-stuffed is penalized in this process. Writing like a real person to a real person is now a technical optimization, not just a stylistic preference. It ensures your content aligns with the semantic intent the AI is trying to satisfy during its fan-out process.

The practical implication is significant. Instead of optimizing for short keyword phrases, you should optimize for the situations and contexts that users describe in their AI conversations. This means writing content that addresses specific scenarios, acknowledges constraints, and provides nuanced recommendations rather than generic advice.

Content structured around “If you are in situation X, then the best approach is Y because Z” performs far better in AI citation than content structured around “The best approach is Y.” The situational context gives the AI confidence that your answer applies to the specific user question it is processing.

This also means long-tail, question-based content is more valuable than ever. FAQ sections, detailed how-to guides, and scenario-specific recommendations give AI systems exactly the structured, situation-aware content they need to generate accurate, cited answers.
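You cannot see an engine's real fan-out, but you can approximate the exercise. The Python sketch below is a toy check under loud assumptions: the sub-queries are ones you write by hand as guesses, and coverage is judged by simple word overlap, which is far cruder than the semantic matching AI systems use. All names are hypothetical.

```python
import re

# Small illustrative stopword list; extend it for real use.
STOPWORDS = {
    "a", "an", "the", "for", "on", "to", "of", "and", "that",
    "what", "is", "are", "do", "not", "in", "with", "without",
}


def keywords(text: str) -> set[str]:
    """Lowercase the text and keep the words that are not stopwords."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def uncovered_sub_queries(
    sub_queries: list[str], sections: dict[str, str], min_overlap: int = 2
) -> list[str]:
    """Return the sub-queries that no section matches on at least min_overlap keywords."""
    missing = []
    for query in sub_queries:
        wanted = keywords(query)
        covered = any(len(wanted & keywords(body)) >= min_overlap for body in sections.values())
        if not covered:
            missing.append(query)
    return missing


# Hand-written guesses at how the WooCommerce question might be split.
guessed = [
    "security plugins for WooCommerce",
    "security on shared hosting",
    "plugins that do not slow down a site",
]
page_sections = {
    "Plugins": "The best security plugins for WooCommerce stores add a firewall and malware scanning.",
    "Hosting": "On shared hosting, security depends on isolation between accounts.",
}
print(uncovered_sub_queries(guessed, page_sections))
```

Any sub-query the function returns points to a scenario your page does not yet address, which makes it a candidate for a new section or FAQ entry.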

Becoming the answer

In 2026, visibility is no longer about being one of ten links on a page. It is about being the definitive answer across a fragmented landscape of LLMs. Whether it is ChatGPT, Gemini, or Perplexity, these systems are looking for structured, fresh, and authoritative content that they can justify to their users.

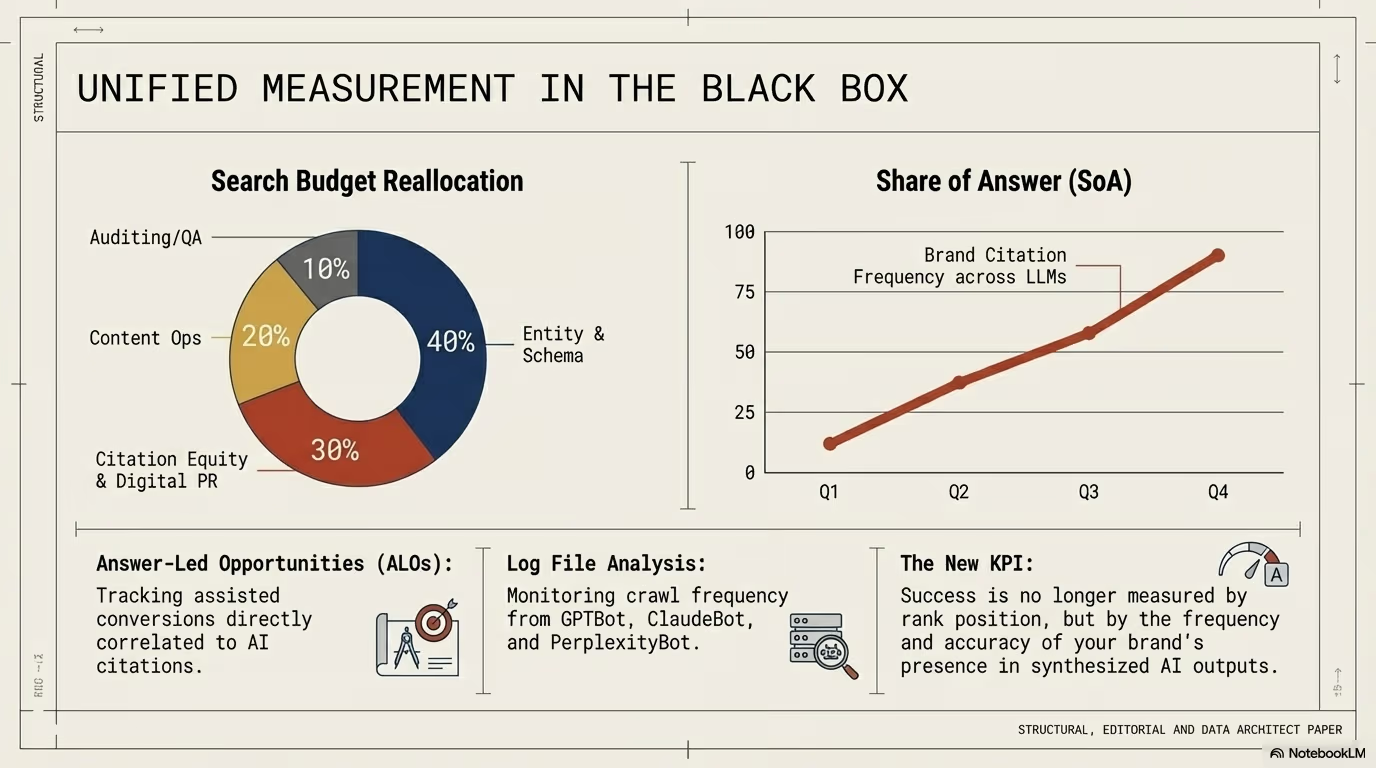

The transition to GEO requires a pivot from chasing clicks to owning the citation. This is not merely a tactical adjustment. It is a strategic reorientation that affects content creation, technical infrastructure, digital PR, and performance measurement.

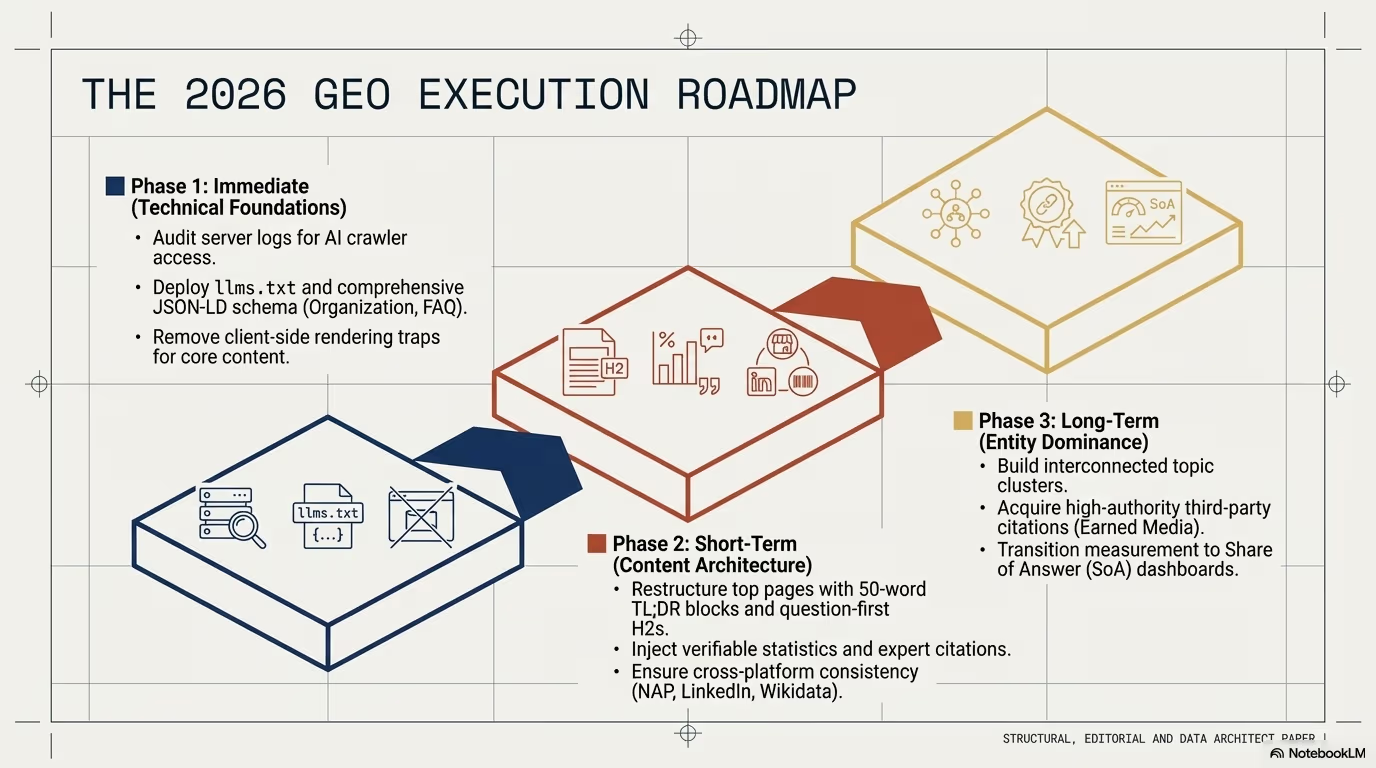

The seven truths outlined in this article form an actionable framework:

- Structure content for extraction: Put your most citation-worthy facts in the first 200 words and build an answer-first architecture that RAG systems can efficiently process.

- Decouple your strategies: Recognize that SEO and GEO are parallel but distinct optimization targets, and invest in both.

- Build topical authority webs: Create interconnected content ecosystems with five or more pages per topic cluster, connected by bidirectional internal links and entity markup.

- Maintain a 90-day refresh cycle: Treat every piece of content as a living document that requires quarterly updates with genuine new information and verified data.

- Invest in the legitimacy layer: Build presence on third-party authoritative platforms that AI systems already trust, creating the confidence signals needed for direct citation.

- Implement the /llms.txt standard: Give AI systems a clean, structured entry point to your expertise by providing machine-optimized content summaries.

- Write for intent, not keywords: Create situation-aware content that matches the conversational, contextual queries users bring to AI systems.

As we move deeper into this AI-first era, business leaders must confront a new reality of measurement and value. If a user never clicks through to your website but gets your brand’s answer from an AI, did you still win, and how are you going to measure it?

The businesses that answer this question first will define the next decade of digital strategy. New metrics are emerging: citation frequency across AI platforms, brand mention sentiment in AI-generated responses, entity confidence scores, and answer ownership rates for key queries. These metrics will eventually become as standard as organic traffic and conversion rates are today.
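Two of these metrics can be computed from a simple observation log. The Python sketch below assumes a hypothetical record format of (platform, query, cited domain) tuples that you collect yourself. It also assumes a working definition of answer ownership rate as the share of tracked queries where your domain is cited at least once, since no standard definition exists yet.

```python
from collections import Counter

Observation = tuple[str, str, str]  # (platform, query, cited_domain)


def citation_frequency(observations: list[Observation], domain: str) -> Counter:
    """Count how often the domain is cited on each platform."""
    return Counter(platform for platform, _query, cited in observations if cited == domain)


def answer_ownership_rate(observations: list[Observation], domain: str) -> float:
    """Share of tracked queries where the domain is cited at least once (assumed definition)."""
    queries = {query for _platform, query, _cited in observations}
    owned = {query for _platform, query, cited in observations if cited == domain}
    return len(owned) / len(queries) if queries else 0.0
```

Tracking both numbers over time gives you a first-pass answer to whether you "won" a query that never produced a click.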

The end of the click is not the end of visibility. It is the beginning of a new kind of influence, one where being the trusted source that AI cites is more valuable than being the link that a user clicks. The organizations that understand this shift and act on it now will own the most valuable real estate in the digital economy: the answer itself.